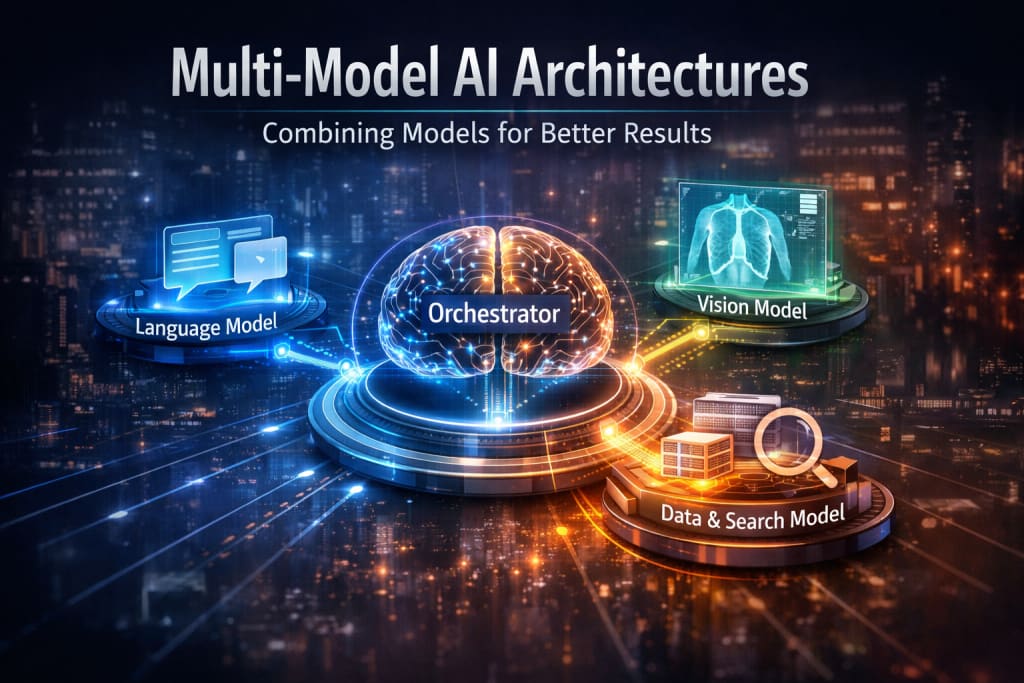

Multi-Model AI Architectures: Combining Models for Better Results

Why the Best AI Results Come from Teams, Not Soloists

For years, the tech world has been racing to build ever-larger AI models. The idea was simple: if you want a smarter system, just feed more data and more parameters into one big model. And yes, these big models are impressive. They can write poetry, answer questions, and generate code, all in the same conversation.

But if you've spent any time using them, you've probably noticed their flaws. They sound confident even when they're wrong. They struggle with anything that happens after their training data is cut off. And sometimes, they just feel... shallow.

That's because there's a ceiling to what one model can do. So, a different approach is taking hold. Instead of building one model to rule them all, developers are starting to build systems that use multiple models, each doing what it does best.

The Problem with One Giant Model

Think about how people work. You would never ask your dentist to repair your car, or a cook to represent you in court. People specialize. We seek out professionals because they know a given field in depth.

Monolithic AI models are the opposite. They're generalists. They've read a bit about everything, so they can talk about almost anything. But because they have to cover so much ground, they often lack the depth a specialist brings.

They also have a memory problem. Most of these models were trained months or even years ago. If you ask them about something recent, they either make something up or just tell you they don't know. Updating them is a huge project: a major refresh can take weeks, cost millions, and may mean retraining much of the model.

How Multi-Model Systems Work

The multi-model approach flips this around. Instead of expecting one model to excel at everything, you build a system in which different models handle different parts of a problem.

It's easy to picture. Imagine asking a smart home assistant: "Based on my calendar and traffic, what time should I leave for my meeting?"

In a multi-model system, that request doesn't go to one giant brain. It goes to a kind of "orchestrator." That orchestrator breaks it down. It sends one request to your calendar model to pull the meeting time and location. It sends another request to a traffic API to check the morning commute. Then, it takes that raw data and hands it to a language model whose only job is to turn information into a clear sentence. Finally, it speaks the answer back to you.

Each component did one job, and together they produced an answer that no single model could have produced on its own.
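To make the flow concrete, here is a minimal sketch of that orchestrator in Python. Everything in it is hypothetical: `calendar_model`, `traffic_api`, and `language_model` are stubs returning fixed values in place of real services, and the 10-minute buffer is an arbitrary assumption. The point is the shape: the orchestrator calls each specialist once, does the simple arithmetic itself, and hands the result to a model whose only job is phrasing.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Meeting:
    start: datetime
    location: str


def calendar_model(user: str) -> Meeting:
    # Hypothetical stub for a calendar service; ignores `user` and returns a fixed meeting.
    return Meeting(start=datetime(2024, 5, 6, 9, 30), location="Downtown office")


def traffic_api(destination: str) -> timedelta:
    # Hypothetical stub for a traffic API: estimated drive time to `destination`.
    return timedelta(minutes=35)


def language_model(meeting: Meeting, leave_at: datetime) -> str:
    # Hypothetical stub for a language model whose only job is phrasing the answer.
    return (f"Your meeting at {meeting.location} starts at {meeting.start:%H:%M}. "
            f"Leave by {leave_at:%H:%M} to arrive on time.")


def orchestrator(user: str, buffer: timedelta = timedelta(minutes=10)) -> str:
    meeting = calendar_model(user)                   # 1. meeting time and place
    drive_time = traffic_api(meeting.location)       # 2. commute estimate
    leave_at = meeting.start - drive_time - buffer   # 3. plain arithmetic, no model needed
    return language_model(meeting, leave_at)         # 4. turn data into a sentence


print(orchestrator("alice"))
```

With these stub values, the 09:30 meeting minus a 35-minute drive and a 10-minute buffer gives a departure time of 08:45. Swapping a stub for a real service would not change the orchestrator itself.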

Why This Approach Works Better

This isn't just about being clever. It solves some very real headaches.

First, you get better answers. When you route a task to a model that was specifically trained for it, the quality tends to go up. A model built for medical research is more likely to catch nuances that a general chatbot would miss.

Second, you cut down on the nonsense. A lot of the "hallucinations" in AI happen because models are forced to guess. In a multi-model setup, you can include a model whose job is to look things up in a live database. The creative model just has to summarize what the search model found. It stops guessing and starts citing.
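Here is a toy version of that "look it up first, then summarize" pattern, sometimes called retrieval-augmented generation. It is a sketch under loud assumptions: `DOCS` is a made-up three-entry knowledge base, `search_model` ranks documents by simple word overlap rather than the embedding search a production system would likely use, and `answer_model` is a stub that may only restate what was retrieved, with a citation. When nothing matches, it says so instead of guessing.

```python
import re

# Hypothetical knowledge base: document ID -> text.
DOCS = {
    "policy-12": "Employees may work remotely up to three days per week.",
    "policy-7": "Expense reports must be filed within 30 days of purchase.",
    "faq-3": "The office is closed on public holidays.",
}
STOPWORDS = {"a", "an", "the", "is", "of", "to", "on", "i", "can", "how", "what"}


def tokenize(text: str) -> set[str]:
    # Lowercase words minus common filler words.
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def search_model(query: str, k: int = 1) -> list[tuple[str, str]]:
    # Toy retriever: score each document by how many query words it shares.
    q = tokenize(query)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in DOCS.items()]
    scored.sort(reverse=True)
    return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]


def answer_model(query: str, hits: list[tuple[str, str]]) -> str:
    # Hypothetical generator stub: it only restates retrieved text and cites it.
    if not hits:
        return "I couldn't find that in the knowledge base."
    return " ".join(f"{text} [{doc_id}]" for doc_id, text in hits)


def answer(query: str) -> str:
    return answer_model(query, search_model(query))


print(answer("How many days can I work remotely?"))
print(answer("What is the parking policy?"))
```

The first question matches `policy-12` and the answer carries that citation; the second matches nothing, so the system admits it rather than inventing a policy.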

Third, it's cheaper to run. Why spin up a huge, energy-hungry model to answer "What's the weather?" A small, inexpensive model can handle the simple requests and save the heavy model for tasks that actually need it. That means faster responses and lower costs.
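A router can be as simple as a rule that inspects the request before choosing a model. In this sketch, `small_model` and `large_model` are hypothetical stubs, and the keyword-plus-length heuristic (short requests mentioning weather, time, timers, or unit conversion go to the small model) is an assumption chosen for illustration; some systems use a trained classifier for this step instead.

```python
from typing import Callable

Model = Callable[[str], str]


def small_model(prompt: str) -> str:
    # Hypothetical cheap, fast model for simple requests.
    return f"[small] {prompt}"


def large_model(prompt: str) -> str:
    # Hypothetical expensive model reserved for harder requests.
    return f"[large] {prompt}"


# Assumed intents that the small model can handle (substring match, so it is crude).
SIMPLE_INTENTS = ("weather", "time", "timer", "convert")


def route(prompt: str) -> Model:
    text = prompt.lower()
    is_short = len(text.split()) <= 12
    if is_short and any(intent in text for intent in SIMPLE_INTENTS):
        return small_model
    return large_model


def handle(prompt: str) -> str:
    return route(prompt)(prompt)


print(handle("What's the weather?"))
print(handle("Draft a contract clause covering data retention for EU customers."))
```

Note that a long request that merely mentions the weather, such as a multi-city climate report, still goes to the large model because it fails the length check.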

Finally, it's easier to maintain. When a better translation model comes along, you simply swap out the old one. If your knowledge base changes, you update the retrieval component. You don't have to rebuild everything from scratch.
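One way to get that plug-and-play property is to register each model under a role name, so upgrading one role never touches the others. The `Pipeline` class and the `translator_v1`, `translator_v2`, and `retriever` functions below are hypothetical placeholders, not any particular framework's API.

```python
from typing import Callable, Dict

Model = Callable[[str], str]


class Pipeline:
    """Holds one model per role; re-registering a role replaces that model only."""

    def __init__(self) -> None:
        self.models: Dict[str, Model] = {}

    def register(self, role: str, model: Model) -> None:
        # Registering an existing role simply replaces the old component.
        self.models[role] = model

    def run(self, role: str, text: str) -> str:
        return self.models[role](text)


# Hypothetical components standing in for real models.
def translator_v1(text: str) -> str:
    return f"v1:{text}"


def translator_v2(text: str) -> str:
    return f"v2:{text}"


def retriever(text: str) -> str:
    return f"docs about {text}"


pipe = Pipeline()
pipe.register("translate", translator_v1)
pipe.register("retrieve", retriever)

# A better translator arrives: swap it in; the retriever is untouched.
pipe.register("translate", translator_v2)
print(pipe.run("translate", "hola"))
print(pipe.run("retrieve", "refund policy"))
```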

Where You See This in the Real World

This isn't future tech. It's already running in the background of the tools you might use.

- Company Chatbots: When you ask an internal company bot a question, it usually isn't just guessing. A search model first digs through company documents to find the relevant facts. Then a language model turns those facts into a clear answer. That's why it can cite its sources.

- Medical Tools: Some diagnostic tools look at both images and text. One model might analyze an X-ray using computer vision, while another reads the patient's medical history. The system then presents a combined view to the doctor, helping them make faster, better-informed decisions.

- Coding Assistants: When an AI writes code, a second model may run in the background to check that code for bugs or security issues. You get the code and a quick review at the same time.
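The coding-assistant pattern pairs a generator with a separate reviewer. In this sketch, `code_model` is a hypothetical stub that returns canned code, and `review_model` stands in for the checking model using Python's standard `ast` module: it reports syntax errors and flags calls to `eval` or `exec`, which can run arbitrary code. A real reviewer would check far more than this; the two rules are assumptions chosen to keep the example small.

```python
import ast


def code_model(request: str) -> str:
    # Hypothetical generator stub: returns the same canned snippet for any request.
    return "def add(a, b):\n    return eval(f'{a} + {b}')\n"


def review_model(source: str) -> list[str]:
    # Stand-in reviewer: parse the code, then flag risky calls.
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        return [f"syntax error on line {err.lineno}: {err.msg}"]
    issues = []
    for node in ast.walk(tree):
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id in {"eval", "exec"}):
            issues.append(f"line {node.lineno}: call to {node.func.id}() can run arbitrary code")
    return issues


def assistant(request: str) -> tuple[str, list[str]]:
    # Generate first, then review: the user gets both at once.
    code = code_model(request)
    return code, review_model(code)


code, issues = assistant("write a function that adds two numbers")
print(code)
print(issues)
```

Here the reviewer flags the `eval` call on line 2 of the generated snippet, so the user sees the warning alongside the code.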

Moving Forward

The future of AI is probably not one all-knowing machine. It is more likely to be a group of focused tools working together, much like autonomous agentic systems, where specialized AI agents coordinate to solve complex tasks. By letting models specialize and collaborate, we get systems that are more reliable, easier to update, and ultimately more useful for the complicated problems we need help with.

About the Creator

Eric Weston

Eric Weston is an AI Model Optimization Strategist dedicated to enhancing the efficiency, speed, and precision of LLMs and machine learning models.
