Meta Preparing to Deploy Four New Homegrown Chips to Handle AI

The tech giant accelerates its chip development strategy with four new in‑house processors aimed at powering artificial intelligence workloads — a major step toward hardware independence and AI‑driven innovation.

Meta Platforms is preparing to deploy four new homegrown chips designed specifically to handle AI workloads, a move that underscores how central artificial intelligence has become to the company. The plan marks a key milestone in Meta’s effort to build proprietary hardware capable of powering its expansive suite of AI tools, social platforms, and virtual‑reality ambitions.

This strategic shift highlights Meta’s determination to reduce its reliance on outside chipmakers like Nvidia and AMD while capturing performance advantages that tailor‑made silicon can offer.

Why Meta Is Building Its Own Chips

Meta’s pivot toward in‑house silicon is driven by several key factors:

1. AI Performance Demands

Modern AI workloads, from large language models to real‑time image and video processing, require staggering amounts of compute power. General‑purpose CPUs are poorly suited to this kind of massively parallel math, and even GPUs, which dominate AI computing today, are designed to serve many customers’ workloads rather than one company’s specific models.

Custom AI chips allow Meta to optimize the architecture for its specific requirements, reducing latency, increasing throughput, and improving energy efficiency.

Internal documents and industry analysts indicate that Meta’s AI infrastructure will rely heavily on these chips to run models across platforms like Facebook, Instagram, WhatsApp, and the company’s immersive initiatives in virtual reality and the metaverse.

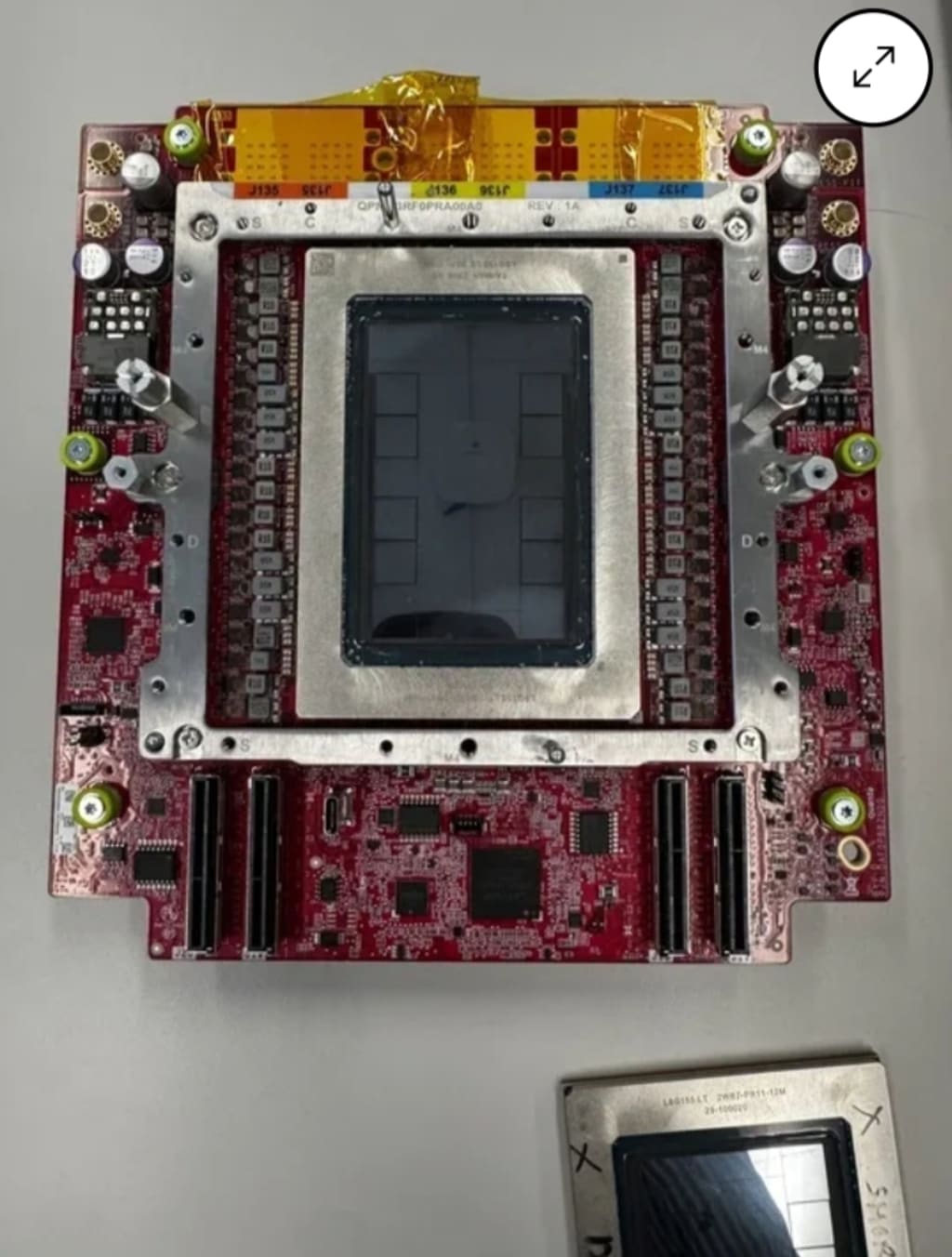

The Four New Chips: What We Know

Meta has not publicly disclosed detailed specifications for its upcoming chips, but industry insiders and job listings suggest the suite will include four distinct processors, each tailored for specific AI applications:

1. AI Training Chip

Designed to accelerate the training of large neural networks, this processor is expected to focus on matrix multiplication and high‑volume data throughput, both essential for training AI systems on massive datasets.

2. AI Inference Chip

Inference — running trained models to make predictions — is a different workload from training. Meta’s inference chip is expected to be more energy‑efficient, optimized for real‑time responses, and scalable across data centers.

3. Edge AI Chip

With an increasing push for on‑device AI, the edge chip will likely power AI tasks directly on user devices, such as AR/VR headsets or mobile apps, reducing reliance on data center compute and improving responsiveness while preserving privacy.

4. Data‑Center Accelerator

This chip will serve as a workhorse inside Meta’s data centers, handling bulk AI workloads that don’t fit neatly into training or inference categories but still demand specialized hardware.
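Why training and inference chips can be designed differently is easier to see with a rough count of arithmetic. The Python sketch below applies a common back‑of‑envelope rule to a single dense (fully connected) layer: a forward pass, which is all inference needs, costs about 2 × batch × inputs × outputs floating‑point operations, while a training step adds a backward pass costing roughly twice that. The function name and layer sizes are illustrative assumptions, not anything Meta has disclosed about its chips, and the count ignores activation functions, optimizer updates, and memory traffic.

```python
def dense_layer_flops(batch: int, d_in: int, d_out: int) -> dict:
    """Rough FLOP count for one dense layer Y = X @ W (illustrative only).

    X has shape (batch, d_in) and W has shape (d_in, d_out).
    """
    # Forward pass: each of the batch * d_out outputs needs about d_in
    # multiply-add pairs, i.e. roughly 2 * d_in floating-point operations.
    forward = 2 * batch * d_in * d_out

    # Backward pass: the gradient w.r.t. the input (dY @ W.T) and the
    # gradient w.r.t. the weights (X.T @ dY) are each a matmul of the
    # same size as the forward pass, so together they cost about 2x forward.
    backward = 2 * forward

    return {
        "inference": forward,             # serving a trained model
        "training_step": forward + backward,  # learning from one batch
    }


if __name__ == "__main__":
    # Hypothetical layer size chosen only for illustration.
    counts = dense_layer_flops(batch=32, d_in=4096, d_out=4096)
    print(f"inference:     {counts['inference']:,} FLOPs")
    print(f"training step: {counts['training_step']:,} FLOPs")
```

With a batch of 32 and a 4,096‑by‑4,096 layer, that works out to roughly 1.07 billion operations per forward pass versus about 3.2 billion per training step. The gap helps explain why a training chip emphasizes raw matrix throughput, while an inference chip can trade some of that throughput for energy efficiency and low latency.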

A Competitive Silicon Landscape

Meta’s ambitious plan places it in competition with established players in the AI hardware space. Nvidia’s GPUs currently dominate data‑center AI workloads, while Google’s Tensor Processing Units (TPUs) power much of Google’s own AI work and cloud services, and Apple’s custom silicon handles on‑device AI in its consumer hardware.

But Meta’s approach has advantages:

Tailored architecture: By designing chips specifically for its AI stack, Meta can aim for better performance per watt on its own workloads than general‑purpose, off‑the‑shelf hardware delivers.

Cost control: Owning its silicon roadmap helps insulate Meta from price volatility and supply chain bottlenecks that have plagued the industry.

Strategic independence: In an era where chip supply — and geopolitics — intersect, having proprietary hardware reduces risk from external dependencies.

The Strategic Roadmap

Deploying four distinct chips signals that Meta is thinking holistically about where and how AI workloads are distributed across its infrastructure.

Initially, the new silicon will likely be used internally — powering everything from AI research to background model serving for consumer features. Over time, these chips could also become part of Meta’s broader platform offerings, including AI‑as‑a‑service tools for developers or partners.

Meta has already invested heavily in AI research spanning generative AI, content understanding, and immersive experiences. In‑house chips are expected to accelerate product development and give the company more control over performance and costs.

What This Means for Meta’s Products

The impact of Meta’s chip strategy will likely be felt across multiple areas:

Social Media Platforms

Faster and more efficient AI processors could enable more advanced recommendation systems, better content moderation tools, and more immersive media features on Facebook, Instagram, and WhatsApp.

AR/VR and the Metaverse

Meta’s long‑term vision hinges on virtual and augmented reality experiences. Powerful on‑device and edge AI chips are crucial for real‑time processing in virtual environments, where latency can make or break the user experience.

AI Tools and Services

As Meta grows its portfolio of AI tools — including creative assistants, automated insights platforms, and enterprise features — in‑house chips could unlock performance improvements that make these tools more competitive.

Balancing Innovation With Public Scrutiny

Meta’s chip development doesn’t occur in a vacuum. The company has faced ongoing regulatory scrutiny in areas ranging from data use to competition and privacy practices. Its push into proprietary hardware adds another layer to these discussions.

Critics argue that controlling both software and hardware could further entrench Meta’s influence in digital infrastructure, raising antitrust concerns. Supporters counter that owning the stack enables innovation and resilience, especially in AI where bottlenecks in compute can slow progress.

Whether regulators will act on Meta’s silicon ambitions remains to be seen, but the company’s expanded hardware footprint is likely to draw attention from policymakers and industry watchdogs.

Challenges Ahead

Meta’s chip initiative is not without hurdles. Designing advanced silicon requires enormous investment, technical talent, and access to cutting‑edge fabrication technologies. The company will need to collaborate with chip manufacturers — such as Taiwan Semiconductor Manufacturing Company (TSMC) — to produce these processors at scale.

Additionally, entering an already competitive AI hardware space means Meta must deliver performance gains that justify its investment, especially when incumbents like Nvidia continue to innovate rapidly.

There are also software integration challenges. Custom hardware is only half the equation: compilers, low‑level libraries, and frameworks such as PyTorch, which Meta originally developed, must be tightly optimized for the new chips to reach their full performance potential.

Industry Implications

Meta’s move highlights a broader trend: major technology companies are increasingly designing custom chips to power AI. From cloud providers to consumer device makers, controlling silicon is becoming a strategic imperative.

As AI workloads proliferate across industries, companies that can deliver high performance with lower energy costs stand to gain a competitive advantage. Meta’s strategy underscores the value of owning both hardware and software, a lesson already embraced by tech firms such as Apple and Google.

Conclusion

Meta’s preparation to deploy four new in‑house AI chips represents a significant evolution in corporate strategy — one that could reshape not only the company’s internal infrastructure but also its product capabilities and competitive positioning.

By reducing dependence on external suppliers and tailoring hardware to meet specific AI needs, Meta is staking a claim in the high‑stakes silicon race that underpins modern computing.

As these chips begin to roll out, the broader tech world will be watching closely to see whether Meta’s hardware gamble pays off — both in performance gains and strategic advantage — in a rapidly changing AI landscape.