Adding AI to Your Mobile App: A Practical Guide

Find out how AI integration works in mobile apps across different industries.

Not every app needs machine learning. But if you’re building products that serve thousands of users with different habits and expectations, static logic eventually starts to crack. The same feed, the same suggestions, the same flows for everyone — it works early on, but it doesn’t scale.

Machine learning helps where fixed rules become unmanageable. Instead of anticipating every scenario upfront, you let the app respond to real behavior. That doesn’t make it “smart” overnight. It just makes it more adaptable over time. If you’re considering this path, working with experienced teams that specialize in AI mobile app development can save you from the most common implementation mistakes.

Where AI Actually Shows Up

Most teams don’t set out to build “AI features.” They start with a concrete problem. Recommendations are usually first, because manual sorting breaks down once catalogs grow. Search ranking follows — users expect relevant results without typing perfect queries. Moderation, automation, and fraud detection appear in products where scale makes manual review impractical.

The common thread is structural: each of these problems involves patterns too messy to encode in rules. Machine learning handles that mess by example rather than instruction. Show it enough behavior, and it starts to generalize.

The Implementation Reality

Technically, integration is more constrained than people assume. Mobile devices have limited compute and battery. Models need to be small enough to run locally or fast enough to feel responsive over the network.

Apple’s Core ML and Google’s ML Kit handle the on-device cases. For heavier lifting, most teams keep inference on servers and update models independently of app releases. A common hybrid pattern: on-device models handle real-time needs like image recognition, while server-side models power recommendations and personalization that can tolerate a few hundred milliseconds of latency.
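To make the hybrid pattern concrete, here is a minimal routing sketch. It is written in Python for brevity; a real app would express the same decision in Swift or Kotlin. The 100 ms cutoff, the task names, and the `choose_backend` function are all assumptions for illustration, not part of Core ML or ML Kit.

```python
from dataclasses import dataclass

# Hypothetical cutoff: below this budget a network round trip is assumed too slow.
REALTIME_BUDGET_MS = 100

# Hypothetical set of tasks that ship with a bundled on-device model.
ON_DEVICE_TASKS = frozenset({"image_recognition"})


@dataclass(frozen=True)
class InferenceRequest:
    task: str
    latency_budget_ms: int
    is_online: bool


def choose_backend(request: InferenceRequest) -> str:
    """Return "on_device", "server", or "unavailable" for a request."""
    has_local_model = request.task in ON_DEVICE_TASKS
    if not request.is_online:
        # Without a network, the local model is the only option.
        return "on_device" if has_local_model else "unavailable"
    if has_local_model and request.latency_budget_ms <= REALTIME_BUDGET_MS:
        # Real-time needs stay on the device.
        return "on_device"
    # Everything that can tolerate network latency goes to the server.
    return "server"
```

With these assumptions, an image recognition request with a 50 ms budget stays on the device, while a recommendations request with a 300 ms budget goes to the server.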

Data quality determines everything here. Models trained on noisy signals produce noisy outputs. The work isn’t glamorous — it’s cleaning logs, removing duplicates, and figuring out what actually correlates with user satisfaction. Skip this, and you’ll spend months chasing weird behaviors that looked fine in testing.
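As a rough illustration of that cleanup work, the sketch below drops incomplete and duplicated interaction events before they reach training. The event shape (dictionaries with `user_id`, `item_id`, `event_type`, and `timestamp`) is an assumption; real logs will need their own rules.

```python
# Hypothetical schema: every usable event must carry these fields.
REQUIRED_FIELDS = ("user_id", "item_id", "event_type", "timestamp")


def clean_events(events):
    """Drop events with missing fields and exact duplicates, keeping order."""
    seen = set()
    cleaned = []
    for event in events:
        # Skip events where a required field is absent, None, or empty.
        if any(event.get(field) in (None, "") for field in REQUIRED_FIELDS):
            continue
        # Two events with identical required fields count as one.
        key = tuple(event[field] for field in REQUIRED_FIELDS)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(event)
    return cleaned
```

Exact-match deduplication is the simplest possible rule. Client retries that log the same action with slightly different timestamps would need a looser key, such as a time window.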

Where It Works

Retail apps benefit most visibly from recommendations and search ranking. Users find what they want faster, and basket sizes tend to increase. Behind the scenes, inventory and pricing models reduce stockouts and manual adjustments.

Health and fitness apps use on-device sensors to detect movement patterns, sleep quality, and activity. The value isn’t in raw numbers — it’s in turning them into insights people can act on. Privacy constraints here are tighter than almost anywhere else, which usually means more processing stays on the device. If you’re building in this space, you’ll quickly discover that healthcare mobile app development comes with a completely different set of expectations around data handling and regulatory compliance.

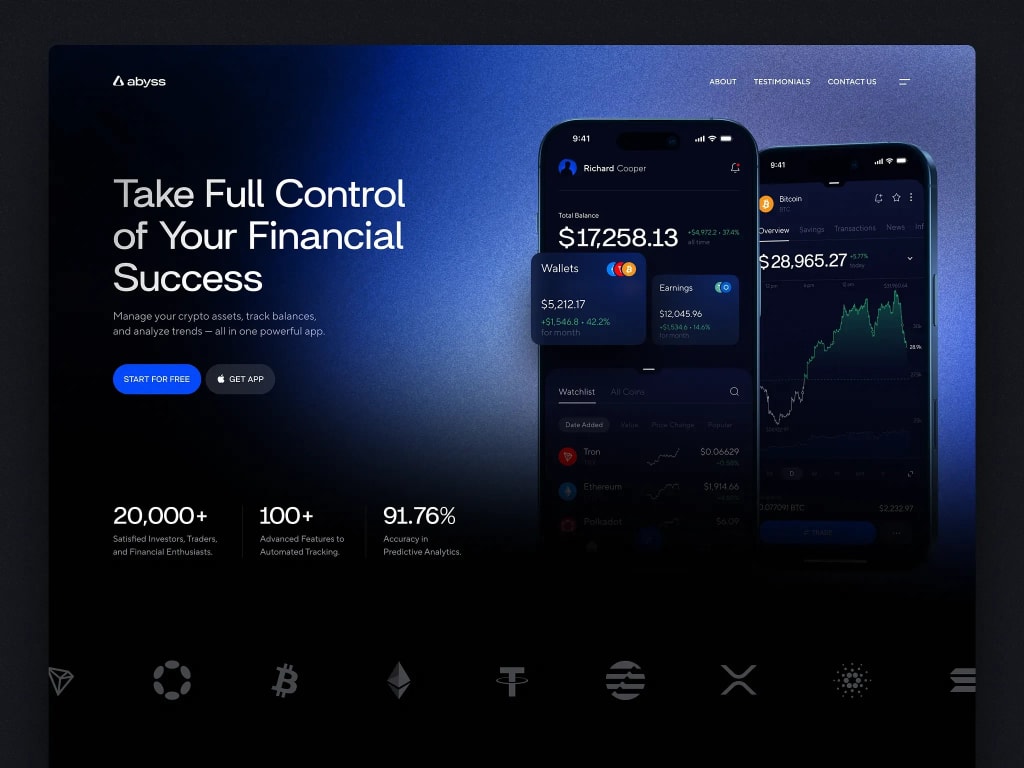

Fintech and productivity apps use prediction more subtly: surfacing the right action at the right moment, flagging unusual patterns, automating repetitive inputs. The UI barely changes, but the experience tightens.

The Hard Parts

First, evaluation is tricky. Accuracy metrics matter less than user behavior. A model can score well on offline tests and still produce recommendations that feel random. You have to watch what people actually do after launch.
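One way to watch what people do after launch is to compare a behavioral metric between users on the old logic and users on the new model. The sketch below assumes click-through rate is the metric and uses a standard two-proportion z-test. The function name and the choice of metric are illustrative, not a prescribed method.

```python
import math


def compare_ctr(control_clicks, control_views, variant_clicks, variant_views):
    """Compare click-through rates of a control group and a model variant."""
    if control_views <= 0 or variant_views <= 0:
        raise ValueError("both groups need at least one view")
    control_ctr = control_clicks / control_views
    variant_ctr = variant_clicks / variant_views
    # Pooled rate under the assumption that both groups behave the same.
    pooled = (control_clicks + variant_clicks) / (control_views + variant_views)
    std_err = math.sqrt(
        pooled * (1 - pooled) * (1 / control_views + 1 / variant_views)
    )
    z_score = (variant_ctr - control_ctr) / std_err if std_err > 0 else 0.0
    # Relative lift is undefined when the control has no clicks at all.
    lift = (variant_ctr - control_ctr) / control_ctr if control_ctr > 0 else None
    return {
        "control_ctr": control_ctr,
        "variant_ctr": variant_ctr,
        "relative_lift": lift,
        "z_score": z_score,
    }
```

A z-score above roughly 1.96 in absolute value corresponds to the conventional 5% significance level for a two-sided test. Clicks are only a proxy, so it is worth pairing this with a metric closer to satisfaction, such as repeat visits or completed purchases.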

Second, teams overreach. Trying to automate everything usually backfires. Narrow, well-defined use cases age better than ambitious systems that try to handle too many scenarios. It’s better to have a simple recommender that works than a complex one that’s right 80% of the time and baffling the rest.

Third, infrastructure complexity adds up. Serving models, versioning them, monitoring drift — these aren’t insurmountable, but they’re real costs. Start with one model, prove it works, then expand.
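Drift monitoring can start small too. The sketch below computes a Population Stability Index (PSI) between a baseline sample of a numeric feature and a recent one. PSI is one common drift measure among several; the bin count and the floor of 1e-6 for empty bins are assumptions chosen for illustration.

```python
import math


def population_stability_index(baseline, recent, bins=10):
    """PSI between two samples, using equal-width bins from the baseline range."""
    if not baseline or not recent:
        raise ValueError("both samples must be non-empty")
    low, high = min(baseline), max(baseline)
    # Fall back to a width of 1.0 when every baseline value is identical.
    width = (high - low) / bins or 1.0

    def bin_shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            # Values outside the baseline range land in the edge bins.
            counts[min(max(index, 0), bins - 1)] += 1
        # Floor each share so the logarithm below is always defined.
        return [max(count / len(values), 1e-6) for count in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))
```

An often quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs are conventions, so calibrate them against your own data before alerting on them.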

Starting Small

If you’re considering this, pick one flow where adaptive logic would remove obvious friction. Instrument it, collect data, build something minimal, and watch what happens. Most successful AI integrations grew from these small beginnings — not grand plans, but targeted solutions to problems that manual effort couldn’t fix.

The technology matters less than the discipline around it: clean data, clear success criteria, and a willingness to kill features that don’t actually help.
